Deepfake Abuse Against Women Surges 1000%

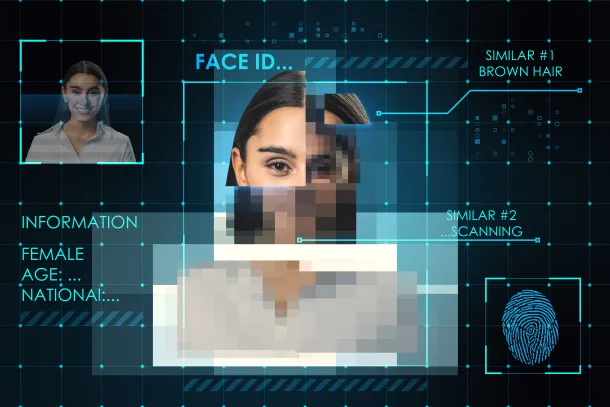

The misuse of artificial intelligence is accelerating worldwide, with deepfake technology increasingly targeting women. Recent findings show that nearly 93% of deepfake victims are women, while deepfake content targeting women has surged by more than 1000% in recent years, highlighting a growing digital safety crisis.

What was once considered a fringe technological threat has now evolved into a widespread challenge for online safety. In India, cybercrime complaints involving women have increased sharply, from around 50,000 in 2024 to nearly 80,000 by 2026, a 60% rise within two years.

Deepfake pornography continues to be the most common form of abuse. The findings indicate that about 98% of deepfake pornographic content targets women, and that it is often created using face-swapping applications and automated bot networks that manipulate publicly available images.

Victims in India are largely between the ages of 18 and 30, including students and young professionals, although cases involving school-aged girls are also increasing. The trend reflects how easily digital images shared online can be manipulated using widely available AI tools.

City-level data shows that cybercrime complaints are concentrated in major urban centers. Bengaluru accounts for nearly 30% of cases, followed by Hyderabad at around 14% and Mumbai at about 13%, while Chennai and Kolkata contribute roughly 5% each, and Delhi accounts for nearly 3%.

The psychological and social consequences are significant. Globally, 62% of women affected by deepfake abuse do not report incidents due to stigma and fear of reputational damage. In India, more than one-third of women experiencing online harassment take no legal action, and many reduce their digital presence after facing such abuse.

Dr. Deepak Kumar Sahu, Founder & CEO of FaceOff Technologies, said, “Deepfake technology has transformed digital fraud into a powerful weapon that can damage reputations and identities within minutes. As generative AI becomes more accessible, protecting digital identity must become a top priority. Advanced detection technologies, stronger verification systems, and public awareness are essential to ensure that artificial intelligence is used responsibly and that individuals feel secure in the digital world.”

Experts say reducing digital exposure and adopting deepfake detection tools are practical steps individuals can take to limit identity misuse. Addressing the growing threat of synthetic media will require coordinated efforts from technology providers, platforms, regulators, and society to safeguard digital trust.