For years, artificial intelligence evolved on a simple belief: bigger models and more data would deliver better outcomes. That logic worked for general tasks, but in sectors like banking and public infrastructure, trust matters more than raw intelligence. Systems must explain decisions, follow rules, and withstand fraud.

FaceOff introduces a different approach through an industry-specific Small Language Model built for identity intelligence. It is not a chatbot but a decision system designed to verify identities and detect fraud with precision.

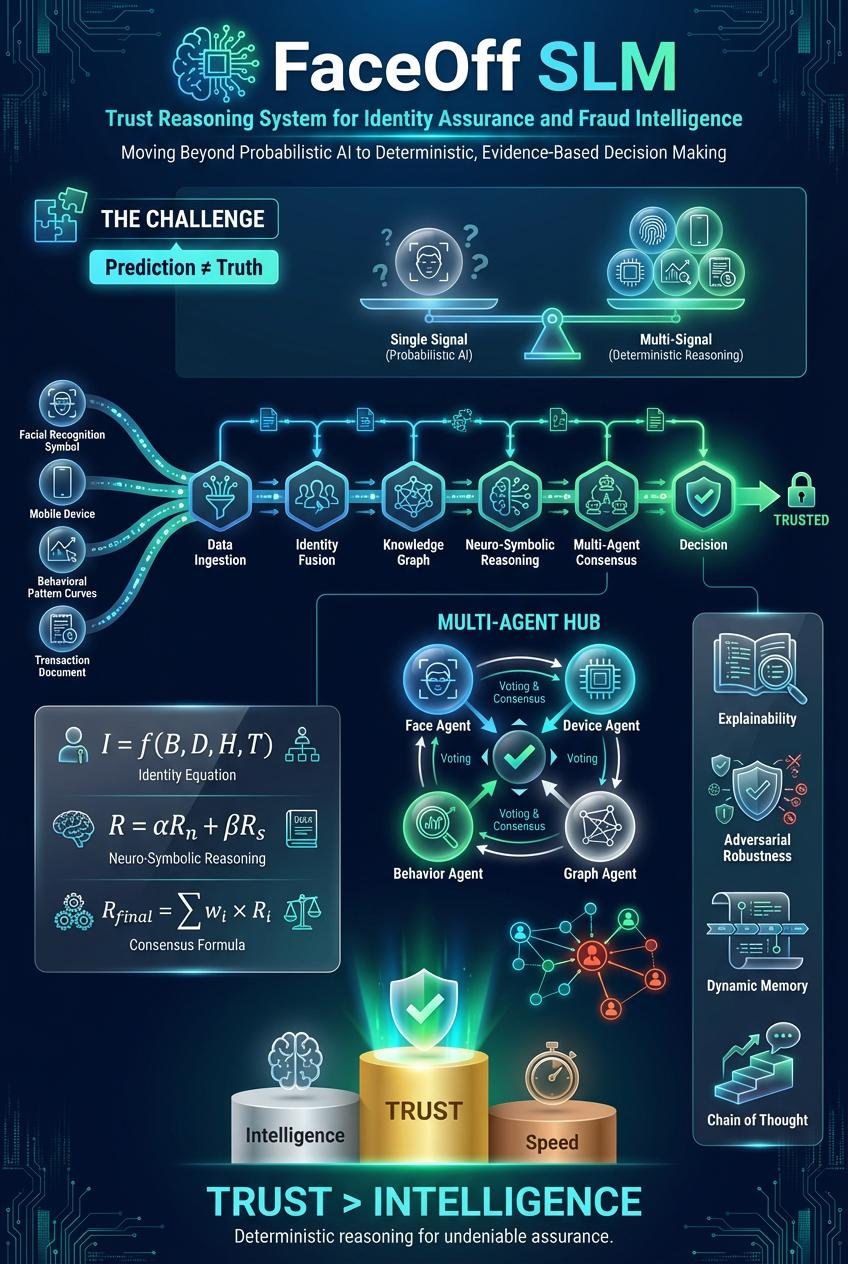

Instead of relying only on text patterns, FaceOff combines biometric inputs, device signals, behavioral data, and transaction context into a unified identity view. This allows it to detect risks that appear only when multiple signals are analysed together.
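To make the idea of a unified identity view concrete, here is a minimal sketch. It is not FaceOff's implementation: the field names, thresholds, and flag names are all hypothetical, chosen only to show how a risk can surface from a combination of signals that each look harmless on their own.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class IdentityView:
    """Hypothetical unified view; every field name here is an assumption."""
    face_match: float        # biometric similarity, 0.0 to 1.0
    device_known: bool       # device previously seen for this user
    behavior_anomaly: float  # behavioral deviation, 0.0 to 1.0
    amount_ratio: float      # transaction amount / user's typical amount


def cross_signal_flags(view: IdentityView) -> list[str]:
    """Return flags that fire only when several signals line up together."""
    flags = []
    # A strong face match alone looks fine; on an unseen device with an
    # unusually large transfer it may point to a replayed or spoofed face.
    if view.face_match >= 0.9 and not view.device_known and view.amount_ratio >= 5.0:
        flags.append("strong_match_new_device_large_transfer")
    # A known device alone looks fine; paired with a weak face match and
    # odd behavior it may point to someone else using the device.
    if view.device_known and view.face_match < 0.6 and view.behavior_anomaly >= 0.7:
        flags.append("known_device_unfamiliar_user")
    return flags


suspicious = IdentityView(face_match=0.95, device_known=False,
                          behavior_anomaly=0.2, amount_ratio=8.0)
routine = IdentityView(face_match=0.95, device_known=True,
                       behavior_anomaly=0.2, amount_ratio=8.0)
print(cross_signal_flags(suspicious))
print(cross_signal_flags(routine))
```

The two example views differ only in whether the device is known, yet only the first is flagged, which is the kind of risk a single-signal check would miss.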

Its neuro-symbolic design blends pattern recognition with rule-based logic. This ensures decisions are both accurate and compliant with real-world policies. A knowledge graph further enhances context by linking users, devices, and transactions to uncover hidden fraud patterns.
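A rough sketch of how such a blend might look follows. It assumes a learned model has already produced a risk score, and layers explicit policy rules on top so that a rule always takes precedence over the score. The rules, the country code placeholder, the threshold, and the tiny edge-list graph are illustrative assumptions, not details of FaceOff itself.

```python
from collections import defaultdict

# Hypothetical policy rules: (name, predicate over case facts, forced outcome).
RULES = [
    ("blocked_country", lambda f: f.get("country") in {"XX"}, "reject"),
    ("kyc_expired", lambda f: f.get("kyc_valid") is False, "review"),
]


def decide(model_score: float, facts: dict, threshold: float = 0.5) -> tuple[str, str]:
    """Symbolic rules are checked first; the learned score decides otherwise.

    Returns (outcome, reason) so each decision carries its own justification.
    """
    for name, predicate, outcome in RULES:
        if predicate(facts):
            return outcome, f"rule:{name}"
    reason = f"model_score:{model_score:.2f}"
    return ("review", reason) if model_score >= threshold else ("approve", reason)


def accounts_sharing_devices(edges: list[tuple[str, str]]) -> dict[str, set[str]]:
    """Treat (user, device) pairs as graph edges and return devices linked
    to more than one user, a simple stand-in for a knowledge-graph query."""
    users_by_device = defaultdict(set)
    for user, device in edges:
        users_by_device[device].add(user)
    return {d: users for d, users in users_by_device.items() if len(users) > 1}


print(decide(0.10, {"kyc_valid": False}))
print(decide(0.10, {"kyc_valid": True}))
print(accounts_sharing_devices([("u1", "d1"), ("u2", "d1"), ("u3", "d2")]))
```

In this sketch a low model score cannot override a failed policy check, which is one way to keep a statistical component inside hard compliance boundaries.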

FaceOff also uses multiple AI agents that independently assess risk before arriving at a final decision. This layered approach improves reliability and reduces bias.
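The article does not say how the agents' assessments are combined, so the following is only one plausible sketch. It assumes three hypothetical agents that each return a risk score between 0 and 1, takes the median as the combined score, and escalates to human review when the agents disagree strongly. The agent names, thresholds, and the median rule are all assumptions.

```python
from statistics import median
from typing import Callable

Agent = Callable[[dict], float]  # each agent maps a case to a risk score in [0, 1]


# Hypothetical agents; each looks at only one kind of signal.
def biometric_agent(case: dict) -> float:
    return 1.0 - case["face_match"]


def device_agent(case: dict) -> float:
    return 0.1 if case["device_known"] else 0.8


def transaction_agent(case: dict) -> float:
    return min(case["amount_ratio"] / 10.0, 1.0)


def final_decision(case: dict, agents: list[Agent],
                   block_at: float = 0.7, max_spread: float = 0.5):
    """Score the case with every agent independently, then combine.

    The median limits the influence of any single outlier agent; a wide
    spread between agents is treated as a reason to ask for review.
    """
    scores = {agent.__name__: agent(case) for agent in agents}
    combined = median(scores.values())
    spread = max(scores.values()) - min(scores.values())
    if combined >= block_at:
        verdict = "block"
    elif spread > max_spread:
        verdict = "review"
    else:
        verdict = "allow"
    return verdict, scores


AGENTS = [biometric_agent, device_agent, transaction_agent]
case = {"face_match": 0.95, "device_known": False, "amount_ratio": 1.0}
verdict, scores = final_decision(case, AGENTS)
print(verdict, scores)
```

Returning the per-agent scores alongside the verdict keeps the evidence behind each decision available for later audit.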

Most importantly, it offers full transparency. Every decision is explainable, auditable, and backed by evidence. In a world of rising digital fraud, FaceOff shifts AI from probability to certainty, redefining trust in critical systems.